Google Trends — notes on data use in a forensic context.

In light of recent media coverage, this page sets out a comprehensive, factual outline of my methodology.

The invisible record — how thoughts become search queries

Google acts as a cognitive extension of the human mind. In the digital age, criminal acts, too, are increasingly prepared through search queries. Information can be retrieved from 1.1 billion websites in a targeted way: quickly, anonymously and with broad reach.

The tight coupling between human and machine leaves traces — often unconsciously and unintentionally. In digital investigations, even incidental queries can provide leads that, under certain technical and legal conditions, can be made visible after the fact.

Google Search & Google Trends

Google Search

Google holds a 79% market share for desktop searches worldwide and around 94% on mobile devices. Usage varies considerably from country to country. Worldwide, Google answers around 40,000 search queries per second.

Google Trends

Google Trends is an analysis tool that visualizes search queries over time and by region. It also surfaces related queries whose search interest has developed in a similar way. Originally designed for digital marketing, it helps identify demand and plan marketing activities more effectively.

Properties of the data

Any interpretation must begin with an understanding of the data structure. Google Trends imposes specific technical and legal constraints that must be taken into account for a robust evaluation.

Live vs. historical

Google Trends distinguishes between live data (7-day look-back with high temporal resolution) and historical data (back to 2004, less detailed and barely usable for terms with low search volume).

Privacy threshold

To protect personal data, Google Trends only considers queries about persons of public interest. A certain volume of independent queries must be registered before any search trajectory is shown.

Relative values

Google Trends does not provide absolute search volumes. Instead, search interest is indexed on a scale from 0 to 100, where 100 marks the highest value within the selected time frame and region. Several search phrases can be compared over time; this is useful for rough order-of-magnitude estimates.
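
To make the scaling concrete, here is a minimal Python sketch. Google Trends does not expose raw counts, so the inputs `term_counts` and `total_counts` and the functions `trends_index` and `compare_terms` are hypothetical; they only illustrate the arithmetic under an assumed model in which each data point is first divided by the total search volume for its time and region, and the resulting shares are then rescaled so that the highest point in the window becomes 100.

```python
def trends_index(term_counts, total_counts):
    """Rescale hypothetical raw counts to a 0-100 index (assumed model)."""
    # Step 1: the term's share of all searches at each time point.
    shares = [t / total for t, total in zip(term_counts, total_counts)]
    peak = max(shares)
    if peak == 0:
        return [0 for _ in shares]
    # Step 2: scale so that the peak within the window becomes 100.
    return [round(100 * s / peak) for s in shares]


def compare_terms(series_by_term, total_counts):
    """Index several terms against one shared peak, as in a comparison chart."""
    shares = {
        term: [c / total for c, total in zip(counts, total_counts)]
        for term, counts in series_by_term.items()
    }
    # The single highest share across all terms defines the value 100.
    peak = max(max(vals) for vals in shares.values())
    return {
        term: [round(100 * s / peak) for s in vals]
        for term, vals in shares.items()
    }
```

In `trends_index([1, 2, 2], [100, 100, 200])`, the third point has twice the raw count of the first but receives the same index, 50, because overall search volume doubled as well. This is one reason why index values must not be read as search counts.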

Formulation & matching

Google Trends distinguishes between regular search (the words may appear in any order and with context-related additions) and exact match (the phrase is placed in explicit quotation marks and must appear exactly as written, although other words may precede or follow it).
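
The difference between the two modes can be approximated with a deliberately simplified Python model. The functions `broad_match` and `exact_match` are illustrative inventions: they treat a query as lowercase, whitespace-separated words and ignore anything else Google's real matching may do. In this sketch, the exact phrase must appear contiguously and in the given order, while other words may surround it.

```python
def broad_match(query: str, terms: str) -> bool:
    """True if every word of `terms` occurs in `query`, in any order (simplified)."""
    words = query.lower().split()
    return all(w in words for w in terms.lower().split())


def exact_match(query: str, phrase: str) -> bool:
    """True if the words of `phrase` occur contiguously and in order in `query`."""
    words = query.lower().split()
    target = phrase.lower().split()
    n = len(target)
    # Slide a window of n words over the query and compare it with the phrase.
    return any(words[i:i + n] == target for i in range(len(words) - n + 1))
```

For instance, `broad_match("xenon therapy side effects", "therapy xenon")` holds, while `exact_match` with the same inputs does not, because the word order differs.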

Geographic attribution

Origin is inferred from the IP address or the location of the mobile device. The location can be obscured via VPN or other techniques. In analytical practice, the willingness to obfuscate depends on the type of search term, the perpetrator group and the location.

Signal, noise and cross-validation

Single signals are not meaningful on their own. Only repeated checks and the layering of independent data threads produce a robust picture — and make valid leads distinguishable from artefacts.

Strong and weak signatures

Google Trends was designed for large data volumes. Its system architecture evaluates only samples and fills missing ranges with computed probabilities. This can produce artefacts: apparent queries that were never made. Queries with very low search volume must be scrutinized critically; their results are not stable over time and may be no more than digital noise. Such queries must be checked repeatedly and cross-validated over time.
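
Repeated retrieval can be evaluated with a simple consistency check. The Python sketch below assumes that the same query and time frame have been retrieved several times and stored as equally long lists of index values; the function name `unstable_points` and the tolerance `max_cv` are assumptions for illustration, not values published by Google. A time point is flagged if it vanishes in some retrievals, or if its coefficient of variation (standard deviation divided by mean) exceeds the tolerance.

```python
from statistics import mean, pstdev


def unstable_points(runs, max_cv=0.25):
    """Return time indices whose values disagree across repeated retrievals.

    `runs` is a list of equally long series, each one retrieval of the same
    query and time frame. `max_cv` is an assumed tolerance.
    """
    flagged = []
    for i, values in enumerate(zip(*runs)):
        m = mean(values)
        if m == 0:
            continue  # zero in every run: consistent, though not proof of absence
        if min(values) == 0:
            # Present in some runs, absent in others: typical sampling noise.
            flagged.append(i)
        elif pstdev(values) / m > max_cv:
            # Spread too large relative to the mean value.
            flagged.append(i)
    return flagged
```

Points that stay at zero in every run are not flagged here, but they are not evidence of absence either: they may simply fall below the privacy threshold.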

"If a search term has only a very low search volume in the period under review, small deviations can occur even within closed time frames." — Isabelle Sonnenfeld

Cross-validation

Analyzing individual queries is like looking at a fragment. Only when several seemingly unrelated parts are layered over time — like transparent slides under the beam of a focused lamp — does a meaningful overall picture emerge. A consistent rise in different but thematically related queries can be considered a statistically plausible lead. Cross-validation embeds individual signals into broader patterns and helps distinguish valid leads from artefacts.
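
The layering of thematically related queries can be sketched as a count of how many series rise above their own baseline at the same time. In this Python sketch, the function `co_rising_periods`, the median baseline and both thresholds (`factor`, `min_terms`) are assumptions chosen for illustration; any series names used with it are placeholders, not real data.

```python
from statistics import median


def co_rising_periods(series_by_term, factor=2.0, min_terms=2):
    """Return (index, terms) pairs where at least `min_terms` series rise together.

    All series must share the same time axis. A term counts as rising at
    index i if its value is positive and at least `factor` times its own
    median. Both thresholds are illustrative assumptions.
    """
    # Each series' median serves as its baseline.
    baselines = {term: median(vals) for term, vals in series_by_term.items()}
    length = len(next(iter(series_by_term.values())))
    hits = []
    for i in range(length):
        rising = [
            term
            for term, vals in series_by_term.items()
            if vals[i] > 0 and vals[i] >= factor * baselines[term]
        ]
        if len(rising) >= min_terms:
            hits.append((i, rising))
    return hits
```

A single series rising on its own is not reported; only simultaneous rises across related terms are, which mirrors the point that isolated signals are not meaningful.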

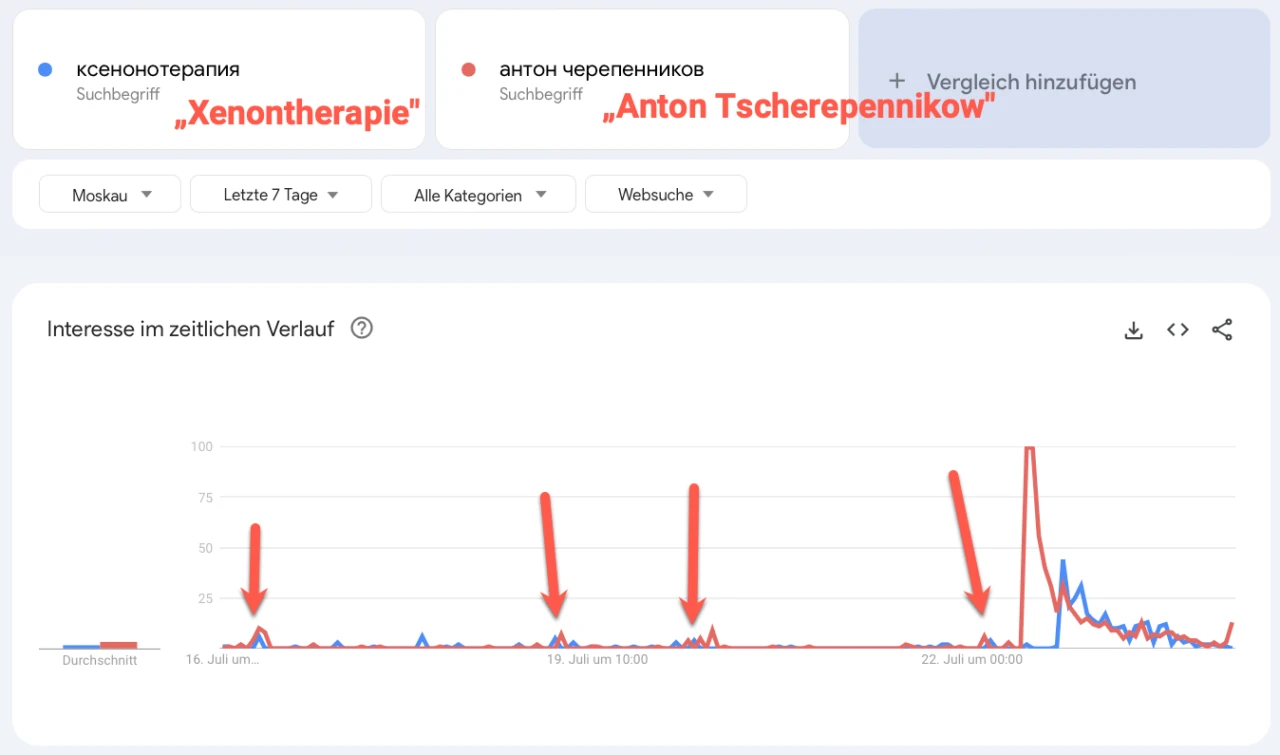

Example: the name "Anton Tscherepennikow" was searched during the same periods as information on xenon therapy. The Russian entrepreneur was later found dead; the cause of death was xenon-gas intoxication.

In practice, analyses suffer more often from missing data than from confabulated data, because the latter can be reduced through cross-validation. Data gaps therefore tend to make hypotheses about an act look unsupported rather than falsely confirm them.

References anchored in reality

Analyses should be supplemented with verifiable real-world references. Data whose validity cannot be tested directly should be matched against facts of incontrovertible validity.
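
Matching signals against verified facts can be as simple as checking whether each signal date lies within a tolerance window around a documented real-world event. The function `anchor_signals` and its three-day default tolerance in this Python sketch are assumptions; in practice the window would depend on the temporal resolution of the data.

```python
from datetime import date


def anchor_signals(signal_dates: list[date], reference_dates: list[date],
                   tolerance_days: int = 3):
    """Split signal dates into those near a verified reference date and the rest.

    Returns (anchored, unanchored); each entry pairs a signal date with the
    reference dates that lie within `tolerance_days` of it.
    """
    anchored, unanchored = [], []
    for signal in signal_dates:
        nearby = [
            ref for ref in reference_dates
            if abs((signal - ref).days) <= tolerance_days
        ]
        if nearby:
            anchored.append((signal, nearby))
        else:
            unanchored.append((signal, nearby))
    return anchored, unanchored
```

Unanchored signals are not discarded automatically; they are the ones that most need an alternative explanation before they are used further.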

Using the data in investigative practice

Isolating perpetrator-specific knowledge

Within the overall volume of queries, those linked to perpetrators must be reliably identified. This is usually possible through temporal correlation. Queries about future acts that nobody yet suspects imply prior knowledge or expectation and allow inferences about the origin of the persons searching.
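
Temporal correlation with a known event can be sketched as a search for unusually high values in the days before the event became known. This Python sketch uses a plain z-score against an earlier baseline, a generic statistical technique chosen here for illustration and not necessarily the method used in actual casework; the function name `pre_event_anomalies`, the 14-day window and the threshold of 3 are assumptions.

```python
from datetime import date
from statistics import mean, pstdev


def pre_event_anomalies(series: dict[date, float], event_date: date,
                        window_days: int = 14,
                        z_threshold: float = 3.0) -> list[date]:
    """Return days shortly before `event_date` whose values stand out.

    `series` maps each day to an index value. Days more than `window_days`
    before the event form the baseline (at least one is required); days
    inside the window are scored against that baseline.
    """
    days = sorted(series)
    baseline = [series[d] for d in days if (event_date - d).days > window_days]
    window = [d for d in days if 0 < (event_date - d).days <= window_days]
    mu = mean(baseline)
    # A perfectly flat baseline has zero spread; fall back to 1.0 to avoid division by zero.
    sigma = pstdev(baseline) or 1.0
    return [d for d in window if (series[d] - mu) / sigma >= z_threshold]
```

A hit only marks a date worth examining; whether it reflects prior knowledge has to be established by other means.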

Hypothesis testing

Most analyses begin with the formulation of a hypothesis that is then either refuted or substantiated. When data look conspicuous, a considerable share of the time must be spent identifying alternative explanations. Only if no alternative explanation can be found can the finding serve as a starting point for further investigation.
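
One alternative explanation that can be tested mechanically is ordinary fluctuation. The placebo-style check below, a generic technique substituted here for illustration, compares the conspicuous window with every other window of the same length in the same series. The function `placebo_share` and its parameters are hypothetical.

```python
from statistics import mean


def placebo_share(values: list[float], start: int, length: int) -> float:
    """Share of same-length windows whose mean is at least the observed window's mean.

    The observed window is included, so the smallest possible result is
    1 / number_of_windows. A high share means the conspicuous window is
    ordinary for this series, so chance remains a plausible explanation.
    """
    if length < 1 or start < 0 or start + length > len(values):
        raise ValueError("window must lie inside the series")
    observed = mean(values[start:start + length])
    window_means = [
        mean(values[i:i + length]) for i in range(len(values) - length + 1)
    ]
    return sum(m >= observed for m in window_means) / len(window_means)
```

With only a handful of windows the share cannot become very small, which is another reason why short or sparse series rarely support strong conclusions.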

Intended use

Findings derived from Google Trends can only ever cover one aspect of comprehensive investigative work. They are one piece of a larger puzzle and can help make investigations more focused.

Circumstantial indications, not proof

"The indications derived from Google Trends cannot provide proof in the classical sense. They can only raise questions that may contribute to informed decision-making during an investigation."

Ethics and data protection

Responsible handling of digital data is essential. All forensic analyses observe applicable data-protection laws and ethical principles. The evaluation of publicly accessible data serves to understand social phenomena — never to undermine personal rights.

All steps in the work are oriented toward maximum transparency, fairness and scientific rigor. Analyses are carried out at an aggregated, anonymized level.

About the author

Steven Broschart has been working intensively with Google since 2003. He has been using Google Trends data since 2006. Since 2018, he has focused on forensic evaluation, in which digital traces are matched against real-world events. He supports German law-enforcement agencies in complex cases.